The Digital Health Department (DéSaN) of Rouen University Hospital is a multidisciplinary team specializing in medical informatics and medical documentation. DéSaN develops and maintains three major health knowledge sources: CISMeF (Catalogue and Index of French-Language Medical Sites, with over 130,000 full-text resources), LiSSa (Scientific Literature in Health, with 1.2 million metadata entries), and HeTOP (Health Terminology/Ontology Portal, covering 109 health terminologies and ontologies in 55 languages).

Technical/scientific challenge:

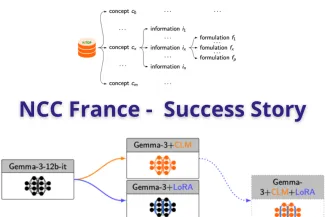

Generative Large Language Models (LLMs) lack specialized knowledge of the French medical domain. The project aimed to inject millions of medical concepts from the HeTOP, CISMeF, and LiSSa databases into an LLM while preserving its base generative capabilities. Two technical approaches were tested: continual pre-training via Causal Language Modeling (CLM) and fine-tuning via Low-Rank Adaptation (LoRA). The main challenge was to determine which of the two allowed the more effective knowledge injection while remaining scalable and preserving the model’s general-purpose abilities.
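To make the contrast between the two approaches concrete, the sketch below counts trainable parameters for a single weight matrix. It assumes the standard LoRA formulation, in which a frozen weight matrix of shape `d_out × d_in` is augmented by two small trainable matrices of rank `r`, and it assumes that the CLM run updated every weight (the write-up does not say so explicitly). The layer size and rank are hypothetical illustration values, not the project's actual configuration.

```python
def full_params(d_out: int, d_in: int) -> int:
    """Trainable parameters when the whole weight matrix is updated."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters for a LoRA adapter on the same matrix.

    The frozen weight W (d_out x d_in) is left untouched; only the
    low-rank factors B (d_out x rank) and A (rank x d_in) are trained.
    """
    return rank * (d_out + d_in)


# Hypothetical values chosen for illustration only.
D_OUT, D_IN, RANK = 4096, 4096, 16

full = full_params(D_OUT, D_IN)
lora = lora_params(D_OUT, D_IN, RANK)

print(f"full update : {full:,} trainable parameters")
print(f"LoRA adapter: {lora:,} trainable parameters")
print(f"LoRA trains {lora / full:.2%} of the matrix")
```

Under these assumed values the adapter trains well under 1% of the matrix, which is the usual reason LoRA is expected to need less memory and time than a full-weight update.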

Solution:

CRIANN supported DéSaN throughout 2025 through 14 technical meetings, providing GPU infrastructure, support for distributed LLM fine-tuning, help implementing the training techniques, and resource monitoring. The team configured a multi-GPU environment (4 × NVIDIA H200 GPUs, 140 GB VRAM each) on the Austral supercomputer. Two training approaches were tested using corpora built from medical concepts: CLM, which required 20 hours of training and 406 GB of memory, and LoRA fine-tuning, which required only 2 hours of training and 110 GB of memory. Both approaches successfully injected medical knowledge while preserving the model’s generative capabilities, with LoRA proving the more efficient and scalable of the two.
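The reported figures can be put side by side with a little arithmetic. The sketch below uses only the numbers stated above and assumes that the memory figures refer to aggregate GPU memory; the write-up does not say how many GPUs each run actually used, so the GPU count computed here is only a lower bound that ignores sharding overhead.

```python
import math

GPU_VRAM_GB = 140  # per-GPU VRAM as reported for the H200 nodes

# Figures reported for the two training runs.
runs = {
    "CLM": {"hours": 20, "memory_gb": 406},
    "LoRA": {"hours": 2, "memory_gb": 110},
}


def min_gpus(memory_gb: float, vram_gb: float = GPU_VRAM_GB) -> int:
    """Smallest number of GPUs whose combined VRAM covers memory_gb.

    Assumption: memory can be split perfectly across GPUs, so this is
    a lower bound rather than the count the team actually used.
    """
    return math.ceil(memory_gb / vram_gb)


time_ratio = runs["CLM"]["hours"] / runs["LoRA"]["hours"]
memory_ratio = runs["CLM"]["memory_gb"] / runs["LoRA"]["memory_gb"]

for name, run in runs.items():
    print(f"{name}: {run['hours']} h, {run['memory_gb']} GB, "
          f"at least {min_gpus(run['memory_gb'])} GPU(s)")

print(f"CLM took {time_ratio:.0f}x the training time of LoRA")
print(f"CLM used {memory_ratio:.2f}x the memory of LoRA")
```

Read this way, the LoRA run would fit within the VRAM of a single 140 GB GPU, while the CLM run needs the memory of at least three.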

Business impact:

The project successfully enriched the LLM with French medical knowledge, enabling it to accurately retrieve domain-specific information previously unknown to the base model. LoRA fine-tuning demonstrated a realistic and scalable approach for large datasets, maintaining the model’s general-purpose functionality while specializing in medical tasks. This work enhances the potential of AI-assisted medical applications and provides a framework for future research, including hybrid methods such as Retrieval-Augmented Generation (RAG) and integration with symbolic AI and ontological data. The results were presented at JCAD 2025 in Lille on September 17, 2025, promoting scientific dissemination and collaboration.

Benefits:

- Effective injection of millions of medical concepts into a generative LLM

- Preservation of the model’s general-purpose capabilities while specializing in medical knowledge

- Efficient training using LoRA: 2 hours and 110 GB of memory, compared to 20 hours and 406 GB for CLM

- Scalable approach suitable for large medical corpora

- Contribution to AI-assisted medical research and future hybrid LLM developments